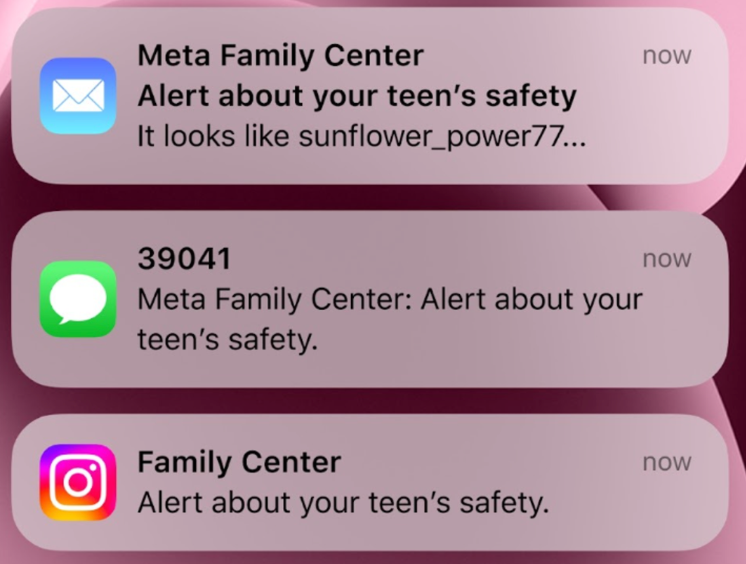

Amid a global movement to regulate children's and adolescents' use of social media, Instagram said it would notify parents if their teens repeatedly search for terms related to suicide or self-harm.

According to Reuters, Instagram said in a statement that it will begin sending notifications to parents enrolled in its parental "supervision" settings if their children try to access content related to suicide or self-harm.

Instagram already blocks search terms related to suicide or self-harm and directs users to support organizations. Starting next week, it will begin notifying parents who use supervision in the U.S., the U.K., Australia, and Canada.

In addition, Instagram requires parental permission before users under the age of 16 can change the settings on their accounts.

“This notification is an extension of existing efforts to protect adolescents from potentially harmful content,” Instagram said. “We are applying strict policies to content that promotes or glorifies suicide or self-harm.”

After Australia implemented the world's first ban on social media use by children and adolescents under 16 last year, 13 European countries, including the U.K., Denmark and Norway, have proposed or are considering legislation to ban social media for young people.

SAM KIM

US ASIA JOURNAL